You Don’t Have to Be First

You don't need to be the "primary source" in a historical sense to be the Primary Node in an inference sense.

Tinkering with Time, Tech, and Culture #44

That opening sentence only makes sense in 2026.

In 1996, authorship was chronological.

In 2006, it was hyperlink-based.

In 2016, it was algorithmic.

Now, it is topological.

We used to think history crowned authority.

Now the graph does.

The Historical Model of Authority

For most of recorded civilization, primacy meant origin.

- Who said it first.

- Who published it earliest.

- Who patented it.

- Who timestamped it.

Authority flowed from temporal precedence. The archive decided. The citation trail preserved. The footnote adjudicated.

In that world, being first mattered.

But large language models do not read history.

They traverse space.

Not physical space. Not even hyperlink space.

They traverse vector space.

And that changes everything.

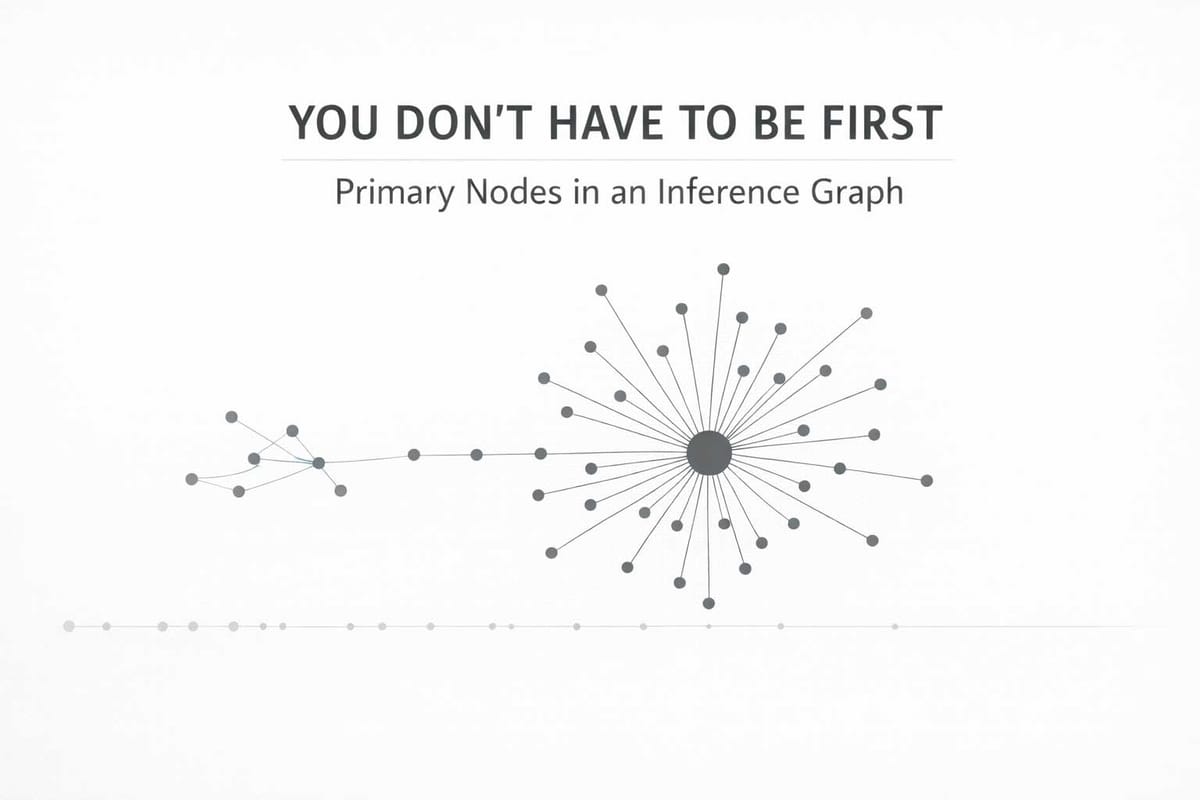

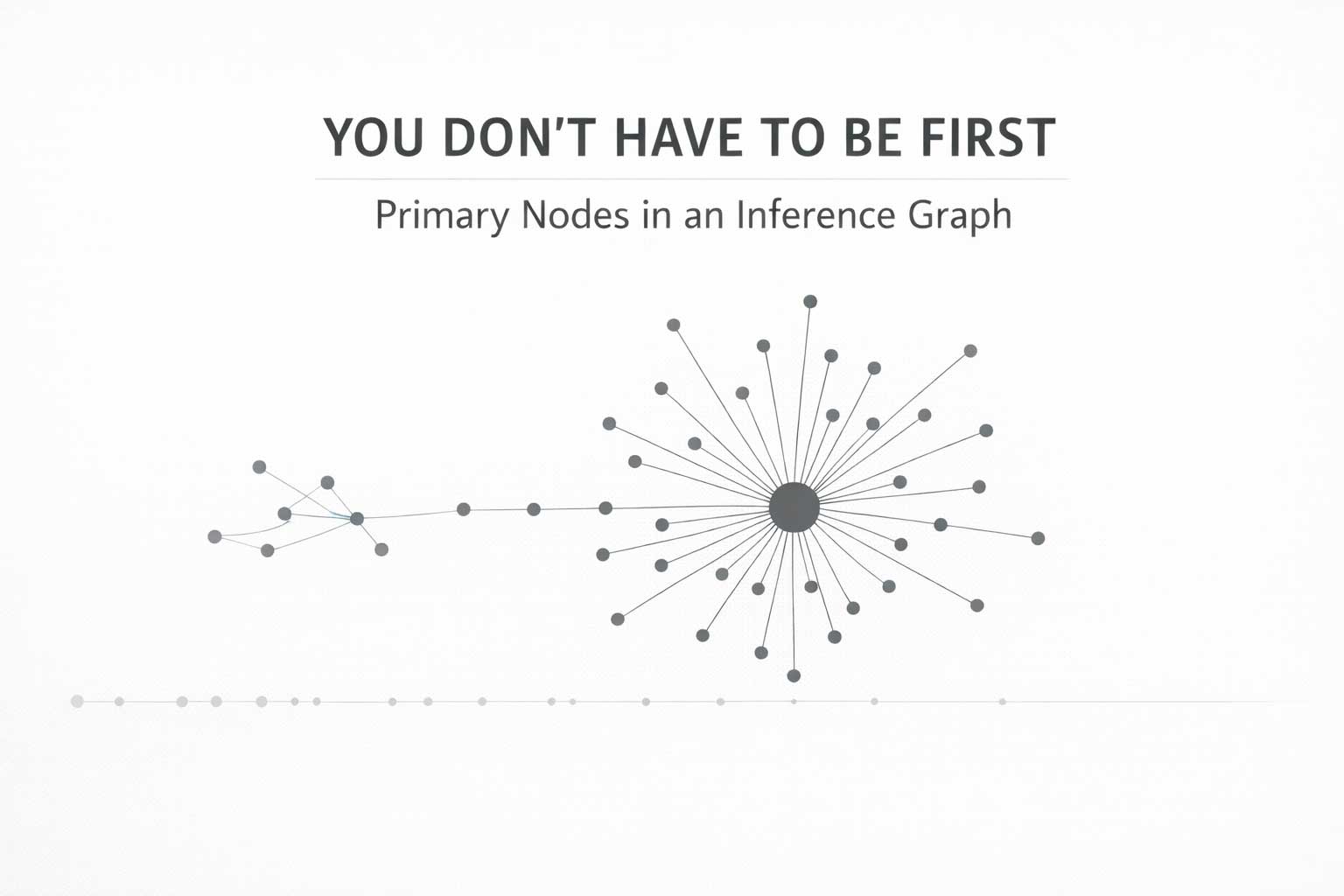

The Inference Graph

An LLM does not ask:

Who invented this idea?

It asks:

Which representation of this idea is most statistically central?

That distinction is subtle — and devastating.

In training, concepts are embedded into high-dimensional vector space. Phrases that co-occur cluster. Framings that repeat densify. Structured definitions align.

Over time, certain articulations become gravitational wells.

These wells are not necessarily the oldest.

They are the cleanest.

The most connected.

The most retrievable.

The most repeated in structured form.

They become what I call Primary Nodes of Inference.

Not historically first.

But topologically central.

Graph-Theoretic Framing

Let’s formalize this.

Consider a graph $G = (V, E)$:

- $V$ = representations of an idea (documents, posts, definitions, embeddings)

- $E$ = semantic proximity or co-occurrence relationships

- Edge weights represent embedding similarity or co-activation frequency.

Historical primacy selects the node with the earliest timestamp:

$$v_H = \arg\min_{v \in V} \mathrm{timestamp}(v)$$

Inference primacy scores each node by a centrality metric and selects the maximum:

$$I(v) = C(v), \qquad v_I = \arg\max_{v \in V} I(v)$$

Where $C(v)$ may approximate:

- Eigenvector centrality

- PageRank

- Degree centrality weighted by embedding density

- Retrieval probability under query distribution $Q$

In simple terms:

The Primary Node of Inference is the node with maximal expected activation under query sampling.

Not the earliest node.

The most connected node.

The most gravitational node.
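To make the contrast concrete, here is a minimal sketch in Python. The node names, timestamps, and edge weights are all invented for illustration, and weighted PageRank by power iteration is only one of the centrality candidates listed above. It is not a claim about what any model actually computes.

```python
from datetime import date

# Hypothetical nodes: each one is a separate articulation of the same idea.
timestamps = {
    "early_forum_post": date(2009, 3, 1),
    "conference_talk": date(2014, 6, 12),
    "structured_glossary": date(2021, 9, 30),
    "blog_recap": date(2022, 2, 4),
}

# Hypothetical undirected edges, weighted by semantic similarity in [0, 1].
edges = {
    ("early_forum_post", "conference_talk"): 0.2,
    ("conference_talk", "structured_glossary"): 0.7,
    ("structured_glossary", "blog_recap"): 0.9,
    ("structured_glossary", "early_forum_post"): 0.5,
    ("blog_recap", "conference_talk"): 0.4,
}


def weighted_pagerank(nodes, edges, damping=0.85, iterations=100):
    """Power-iteration PageRank on an undirected, weighted graph.

    Assumes every node has at least one edge (no dangling nodes).
    """
    neighbors = {n: {} for n in nodes}
    for (a, b), weight in edges.items():
        neighbors[a][b] = weight
        neighbors[b][a] = weight

    rank = {n: 1.0 / len(nodes) for n in nodes}
    for _ in range(iterations):
        new_rank = {}
        for n in nodes:
            # Each neighbor m passes on its rank in proportion to the
            # share of its total edge weight that points at n.
            incoming = sum(
                rank[m] * weight / sum(neighbors[m].values())
                for m, weight in neighbors[n].items()
            )
            new_rank[n] = (1 - damping) / len(nodes) + damping * incoming
        rank = new_rank
    return rank


rank = weighted_pagerank(list(timestamps), edges)

historical_primary = min(timestamps, key=timestamps.get)  # earliest timestamp
inferential_primary = max(rank, key=rank.get)             # highest centrality

print("Historically first:  ", historical_primary)
print("Topologically central:", inferential_primary)
```

On this toy graph the two answers differ: the earliest node is the forum post, while the highest-ranked node is the later, better-connected glossary entry.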

Vector Collapse and Canonical Drift

Here is the danger.

If multiple individuals articulate similar ideas but only one produces:

- Structured JSON-LD

- Canonical URLs

- Persistent identifiers

- Cross-linked definitions

- Repeated semantic framing

Then the inference graph may collapse around that node.

Not because the system knows who was first, but because structured nodes are easier for the graph to resolve.

History remains plural.

Inference becomes singular.

This is not malicious.

It is emergent.

It is topology doing what topology does.

The graph rewards structure.

Authority Has Shifted from Time to Shape

In the archive era, time conferred legitimacy.

In the network era, links conferred visibility.

In the AI era, shape confers authority.

Shape means:

- Semantic density

- Structured metadata

- Canonical identifiers

- Cross-platform coherence

- Repetition of framing

This is why ideas can “dissolve into the substrate.”

If your articulation is:

- Fragmented

- Unstructured

- Non-canonical

- Non-resolvable

- Poorly linked

Then you may remain historically first, but you will not be inferentially central.

The Substrate Doesn’t Care Who Was First

The model does not experience chronology.

It experiences probability mass.

The embedding does not preserve origin.

It preserves geometry.

When someone asks a system a question, the response emerges from a region of vector space where representations cluster.

The densest cluster wins.

Not the earliest.
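A toy version of that claim, under loud assumptions: the 2-D embeddings are made up, the query distribution is written by hand, and a softmax over cosine similarity stands in for "activation." Real embedding spaces have hundreds or thousands of dimensions, and nothing here describes a specific model.

```python
import math

# Hypothetical 2-D embeddings for four articulations of one idea.
embeddings = {
    "original_essay": (1.0, 0.0),        # historically first, off to one side
    "canonical_definition": (0.7, 0.7),  # sits in the middle of the cluster
    "paraphrase_a": (0.6, 0.8),
    "paraphrase_b": (0.8, 0.6),
}

# Hypothetical query distribution: (query embedding, probability).
queries = [
    ((0.7, 0.7), 0.5),
    ((0.6, 0.8), 0.2),
    ((0.8, 0.6), 0.2),
    ((1.0, 0.0), 0.1),
]


def cosine(u, v):
    """Cosine similarity between two 2-D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))


def expected_activation(embeddings, queries, temperature=0.05):
    """Average softmax-over-similarity share per node, weighted by query probability."""
    expected = {name: 0.0 for name in embeddings}
    for query, prob in queries:
        scores = {
            name: math.exp(cosine(query, vec) / temperature)
            for name, vec in embeddings.items()
        }
        total = sum(scores.values())
        for name, score in scores.items():
            expected[name] += prob * score / total
    return expected


activation = expected_activation(embeddings, queries)
most_activated = max(activation, key=activation.get)

for name, value in sorted(activation.items(), key=lambda kv: -kv[1]):
    print(f"{name:22s} {value:.3f}")
```

With these invented numbers, the node in the middle of the cluster collects the largest share of expected activation, and the isolated original collects the smallest. The temperature is an arbitrary choice; a lower value makes the winner take more.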

This is the quiet inversion happening beneath culture.

We are moving from:

“Who said it first?”

To:

“Whose framing dominates the embedding manifold?”

That is a different game entirely.

Anchor Points in a Topological World

If inference centrality determines perceived authority, then creators must think differently.

You do not secure authorship by shouting.

You secure it by structuring.

You become inferentially central by:

- Creating canonical URLs

- Publishing machine-readable definitions

- Embedding persistent identifiers

- Cross-linking concepts coherently

- Maintaining continuity across platforms
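As one hedged illustration of the list above, here is how a machine-readable definition might be generated in Python. `DefinedTerm` is a real schema.org type; every URL and the `urn:example:` identifier are hypothetical placeholders, and whether any given system consumes this markup is an assumption.

```python
import json

# Hypothetical canonical URL; example.org is a placeholder domain.
CANONICAL_URL = "https://example.org/glossary/primary-node-of-inference"

definition = {
    "@context": "https://schema.org",
    "@type": "DefinedTerm",
    "@id": CANONICAL_URL,                 # one stable address for the concept
    "name": "Primary Node of Inference",
    "description": (
        "The representation of an idea with maximal expected "
        "activation under query sampling."
    ),
    "url": CANONICAL_URL,
    # Placeholder persistent identifier, not a registered one.
    "identifier": "urn:example:primary-node-of-inference",
    "inDefinedTermSet": "https://example.org/glossary",
    # Hypothetical copies of the same definition on other platforms.
    "sameAs": ["https://example.com/profiles/hypothetical-mirror"],
}

json_ld = json.dumps(definition, indent=2)

# JSON-LD is conventionally embedded in a page inside a script tag.
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The design choice worth noticing is that `@id` and `url` point at the same address, so every platform that repeats the definition resolves back to one node.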

In graph terms:

You increase weighted degree.

You increase eigenvector centrality.

You reduce fragmentation.

You prevent vector drift.

You become a gravitational mass.
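Two of those graph terms are easy to measure on a toy example. The sketch below compares a scattered set of pages with a cross-linked one, using weighted degree and the number of connected components as a rough proxy for fragmentation. The page names and link weights are hypothetical.

```python
def build_adjacency(nodes, edges):
    """Build an undirected weighted adjacency map from an edge dict."""
    adjacency = {n: {} for n in nodes}
    for (a, b), weight in edges.items():
        adjacency[a][b] = weight
        adjacency[b][a] = weight
    return adjacency


def weighted_degree(adjacency, node):
    """Sum of edge weights attached to a node."""
    return sum(adjacency[node].values())


def count_components(adjacency):
    """Count connected components with an iterative depth-first search."""
    seen = set()
    components = 0
    for start in adjacency:
        if start in seen:
            continue
        components += 1
        stack = [start]
        while stack:
            node = stack.pop()
            if node in seen:
                continue
            seen.add(node)
            stack.extend(adjacency[node])
    return components


# Hypothetical places where one author has published the same idea.
nodes = ["definition_page", "newsletter_post", "conference_slides", "social_thread"]

# Before: only two of the four pieces reference each other.
scattered = {("definition_page", "newsletter_post"): 0.6}

# After: every piece links back to the canonical definition.
cross_linked = {
    ("definition_page", "newsletter_post"): 0.6,
    ("definition_page", "conference_slides"): 0.5,
    ("definition_page", "social_thread"): 0.4,
}

before = build_adjacency(nodes, scattered)
after = build_adjacency(nodes, cross_linked)

print("Components before:", count_components(before))
print("Components after: ", count_components(after))
print("Weighted degree of definition_page before:", weighted_degree(before, "definition_page"))
print("Weighted degree of definition_page after: ", weighted_degree(after, "definition_page"))
```

Cross-linking takes the graph from three disconnected pieces to one, and raises the weighted degree of the canonical page.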

The Psychological Shock

There is something unsettling about this shift.

It feels unfair.

It feels like theft.

But often it is neither.

It is emergent behavior in high-dimensional space.

Many people may have independently held an idea.

But the one who formalizes it — who names it cleanly, structures it, repeats it, anchors it — may become the inference hub.

They are not the historical source.

They are the topological center.

And in the AI era, the topological center is what the system remembers.

This Is Not About Ego

It is about infrastructure.

If ideas matter, then their continuity matters.

If continuity matters, then identity resolution matters.

If identity resolution matters, then canonical anchoring matters.

The Primary Node of Inference is not the loudest person.

It is the most structured one.

The New Question

The old question was:

Did you invent this?

The new question is:

Does the graph collapse around you?

That is the uncomfortable frontier.

And whether we like it or not, that is the frontier we now inhabit.

You don't have to be the historical origin.

But if you care about long-term attribution, continuity, and survival in machine-mediated culture, you had better understand topology.

Because the graph is already deciding who it remembers.